Recently, Intel announced that it will begin using its new “Tri-Gate” 3D transistors in its upcoming 22nm processors. This got me thinking about how fast my new workstation is (it has an Intel Xeon processor built on 45nm technology) and asking myself why I would need anything faster (although reduced power consumption is always attractive).

I got a new work computer last week, and it’s a huge improvement over what I was using before (a ThinkStation running 32-bit Windows Vista).

The workstation’s foundation is a Lenovo (formerly IBM) ThinkStation S20 series with the following specs:

- Intel Xeon W3550 Processor (3.06GHz 1066MHz 8MB L3) – 130W

- DOS (No Software Preloaded)

- Tower 5×6 Mechanical with Intel 36S Motherboard

- 2GB ECC DDR3 PC3-10600 SDRAM (1GBx2 uDIMMs)

- NVIDIA Quadro 600 (1GB Dual link DVI+DP)

- 500GB SATA 3.5″ Hard Drive – 7200 rpm

- 20-in-1 Media Card Reader

- Lenovo 16x DVD +/- RW Dual Layer (DOS)

- Integrated Ethernet 10/100/1000

- IEEE 1394 Adapter

- Lenovo Preferred Pro USB Full Size Keyboard – US English

- DisplayPort-to-DVI Dongle

And these upgrades were purchased from newegg.com:

- 128 GB Samsung SSD

- 12 GB ECC DDR3 PC3-10600 Server RAM (3×4 GB uDIMMs)

- Windows 7 Professional 64 Bit OS (System Builder Version)

RAM:

Why 12 gigabytes of RAM? It’s not like I’m a video game developer or rendering Pixar movies, but I checked the SolidWorks website for their system recommendations, and for SW2011, 6GB is recommended. Then I looked up which memory configuration works best with the X58 chipset (Intel’s “workstation” chipset). Apparently, a triple-channel configuration works best. I’m not familiar with the triple-channel memory that Intel uses for some of its processors; all the computers I’ve built have used dual-channel configurations with AMD chipsets. Also, with this motherboard’s chipset and Xeon processor, I can use ECC (Error-Correcting Code) server-type memory, which is good for professional/office applications because it is supposed to provide better stability (fewer lock-ups and blue screens).

So I was shopping for ECC RAM, I had read that it’s best to fill all 3 slots on the motherboard (or 6 slots, as long as you install DIMMs in multiples of 3), and I wanted at least 6GB total. The best value I found was 3 sticks of RAM, each DIMM providing 4GB of memory.
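To make the slot arithmetic concrete, here is a small Python sketch that lists the identical-DIMM configurations that keep all three channels evenly populated and reach at least 6GB. The six-slot board and the 1/2/4GB DIMM sizes are assumptions for illustration (not the S20’s verified specs), and `balanced_configs` is a hypothetical helper. Price isn’t modeled, so picking the best value among these options is still up to you.

```python
# Assumed inputs for illustration only; not the ThinkStation S20's verified specs.
CHANNELS = 3              # triple-channel memory on the X58 platform
SLOTS = 6                 # assumption: two DIMM slots per channel
DIMM_SIZES_GB = [1, 2, 4] # assumption: available DIMM sizes
MIN_TOTAL_GB = 6          # SolidWorks 2011 recommendation cited above


def balanced_configs(channels, slots, sizes, min_total):
    """Return (stick_count, dimm_size_gb, total_gb) tuples for identical-DIMM
    setups whose stick count is a multiple of the channel count and whose
    total capacity meets min_total, sorted by total and then stick count."""
    configs = []
    # Stick counts that keep every channel equally populated: 3, 6, ...
    for count in range(channels, slots + 1, channels):
        for size in sizes:
            total = count * size
            if total >= min_total:
                configs.append((count, size, total))
    return sorted(configs, key=lambda c: (c[2], c[0]))


for count, size, total in balanced_configs(CHANNELS, SLOTS, DIMM_SIZES_GB, MIN_TOTAL_GB):
    print(f"{count} x {size} GB = {total} GB")
```

With these inputs it prints five options, from 3 x 2 GB = 6 GB up to 6 x 4 GB = 24 GB, including the 3 x 4 GB = 12 GB setup described above.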

SSD:

I’ve only recently started using SSDs in my computers because they’re getting to the point where the price per GB is reasonable. I went for the Samsung because of its reasonable price point and high customer ratings. I’ve already forgotten technical details like which controller it uses (JMicron, SandForce, etc.), and I don’t care because it’s working very well. Using an SSD as the primary drive (for the OS and important applications) is wonderful. It’s like taking a giant leap forward in computing user experience. Everything is just “snappy”.

Discrete Graphics Card:

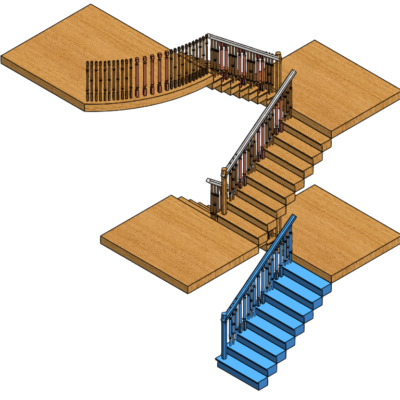

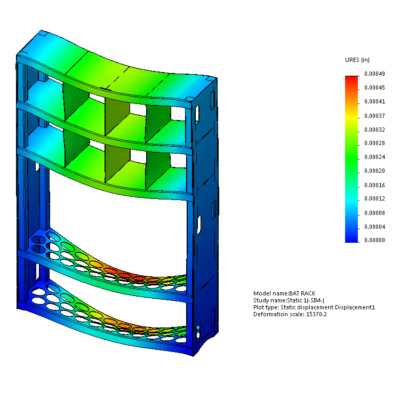

I opted for the NVIDIA Quadro 600 because it was only $40 more than the base card, and it uses the “Fermi” processing cores that I’ve been hearing so much about lately. This is still considered an “entry-level” workstation graphics card, but I don’t need anything too powerful for the types of assemblies and models I usually work with.

Still, I’ve been somewhat disappointed so far. The card works great (and provides excellent value) until I tile several models on my screen. Then each model’s view becomes herky-jerky, shows the contents of another open model file, or goes black. This is most likely a driver issue, compounded by the fact that I still use SW 2009, which Dassault Systèmes hardly supports anymore (now that it’s mid-2011). I expect that when I upgrade to a current version of SolidWorks, I’ll be even happier with this card.

Windows Experience Index = 6.6:
